
8/15/2023

Sophokeys windows

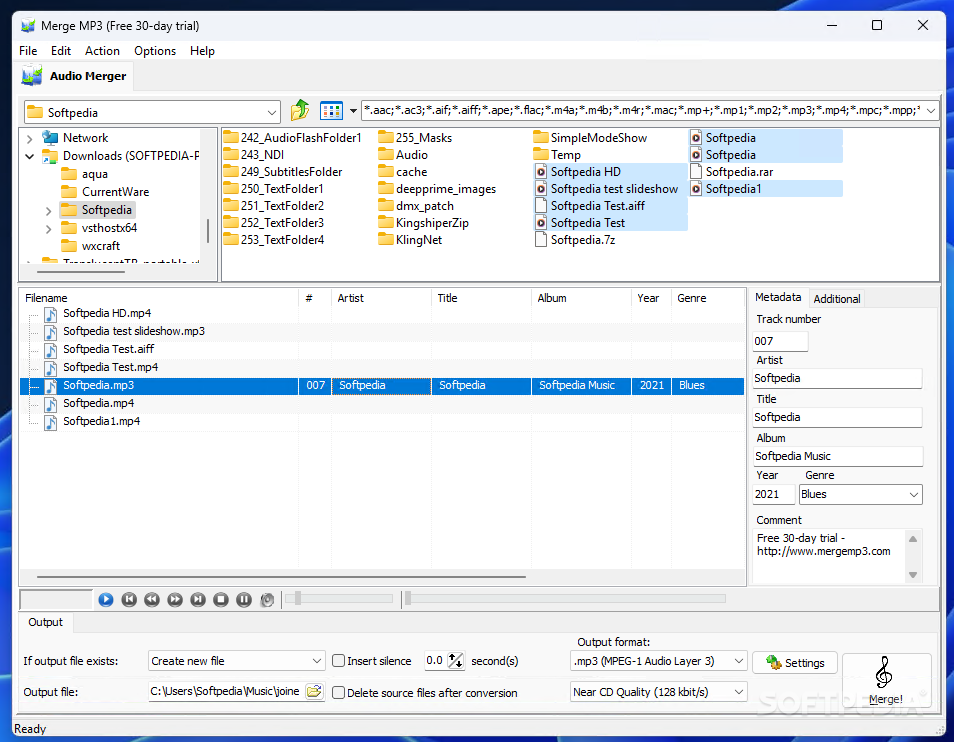

All authors publishing with Dumbarton Oaks must submit text that is Unicode compliant. This policy applies to text in any language, not only polytonic Greek. This introductory guide explains, with particular reference to Greek, the development and architecture of Unicode, methods for working with it, and related issues. It is aimed at anyone new to Unicode or anyone working with non-Unicode fonts (Greek or not). Suggestions for further reading can be found at the end of this guide.

To fully appreciate Unicode, it helps to start with the telegraph. The earliest telegraphs transmitted and received electrical pulses, which were transcribed onto long paper strips. As the voltage changed, either a pen wiggled across the paper or a set of needles or stamps punctured or indented it, creating a visual pattern that represented the message. To interpret the message, sender and recipient had to use the same code, so that the pulses could be converted into letters or numbers. (The audio component, the clicks and clacks we now associate with telegraphs, came later.) These code tables were the prototypes of the code tables used in computers.

The earliest telegraph codes had a limited number of characters. International Morse Code, for instance, has only fifty-one: the twenty-six letters of the English alphabet (assumed to be uppercase), the ten digits, and fifteen punctuation marks. Small character sets were cost effective, because they reduced the number of dots and dashes, and therefore the time, needed to encode, transmit, and decode a message.

As technology developed, new communication devices introduced larger code tables that included lowercase letters, punctuation, and commands (such as "start new line" or "end of transmission"), but these tables were frequently inconsistent with those of other devices. The proliferation of incompatible coding systems created pressure for a single standard for coding telecommunications. The result, in the 1960s, was the American Standard Code for Information Interchange (ASCII), a 128-character code that included uppercase and lowercase letters, the digits, standard punctuation, and commonly used command codes. ASCII was developed by the American Standards Association and later adopted internationally as ISO 646. Its size was chosen for efficiency and for compatibility with binary systems: 128 = 2⁷, the number of values a 7-bit code can represent.

IBM and Apple made ASCII the basis for the character sets of their personal computers. These machines, however, processed data in units of 8 bits (bytes), so 256 (= 2⁸) was the natural size for a code table. Both IBM and Apple therefore developed 256-character sets, filling the extra positions with characters absent from ASCII. Because the two systems were developed independently, text that used the upper 128 characters on one could not be read correctly on the other. Language was an issue too: the character sets computers used through the mid-1990s catered almost exclusively to the Latin alphabet. Both the PC and Macintosh upper character sets (positions 128–255) assigned some slots to Greek letters, but these were intended for mathematicians, not for users of modern or classical Greek.

Thus, in the 1980s and 1990s, anyone who wanted to use a computer to work with Greek or other non-Latin alphabets had to find creative ways around the Latin alphabet. Most often, the solution was simply to work with a customized font. With such a font, typing a Latin letter displayed the Greek letter assigned to its position; pressing the l key, for instance, produced a λ. This imitated what printers had done in the past, when they swapped out and mixed sets of lead type.
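The discussion of 8-bit code tables and customized fonts lends itself to a small demonstration. The Python sketch below is illustrative only and rests on stated assumptions: it treats Python's built-in `cp437` codec (the original IBM PC character set) and `mac_roman` codec (Mac OS Roman) as stand-ins for the PC and Macintosh tables, and the `GREEK_FONT` table is a hypothetical Latin-to-Greek mapping, not the layout of any real font. It shows that a single upper-half byte decodes to different characters on the two systems, and that a customized font changes only what is displayed, while the stored text stays Latin.

```python
# Minimal sketch (illustrative): legacy 8-bit code tables versus customized fonts.
# Assumption: Python's built-in "cp437" (original IBM PC set) and "mac_roman"
# (Mac OS Roman) codecs stand in for the PC and Macintosh character sets.

ASCII_SIZE = 2 ** 7  # 128 values: a 7-bit code, positions 0-127
BYTE_SIZE = 2 ** 8   # 256 values: an 8-bit byte, positions 0-255


def decode_upper_byte(value: int) -> dict:
    """Decode one upper-half byte (128-255) under both legacy code tables."""
    if not ASCII_SIZE <= value < BYTE_SIZE:
        raise ValueError("expected a value in the upper half, 128-255")
    raw = bytes([value])
    return {"cp437": raw.decode("cp437"), "mac_roman": raw.decode("mac_roman")}


# Hypothetical customized font: each Latin key is drawn as a Greek glyph.
# Illustrative table only; not the layout of any real font.
GREEK_FONT = {"a": "α", "b": "β", "g": "γ", "d": "δ", "l": "λ"}


def render_with_font(stored_text: str) -> str:
    """Return what the screen shows; the stored characters are unchanged."""
    return "".join(GREEK_FONT.get(ch, ch) for ch in stored_text)


if __name__ == "__main__":
    # The same byte means different things on the two systems.
    print(decode_upper_byte(0xE0))   # {'cp437': 'α', 'mac_roman': '‡'}
    stored = "gala"                  # what the file actually contains
    print(render_with_font(stored))  # what the reader sees: γαλα
    print(stored.encode("ascii"))    # the bytes are still Latin: b'gala'
```

Byte 0xE0 is a Greek α in code page 437 but a double dagger in Mac OS Roman, which is the cross-platform problem described above. The font half of the sketch shows why the workaround was fragile: the file holds only the Latin letters, so switching to an ordinary font reveals "gala" rather than Greek.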
